-

May 2025 - Aug 2025

Data Science Intern

TGS

Houston, TX, United States

Developed and optimized a large-scale machine learning foundation model for geological data.

Improved the model's architecture and fine-tuned it on industry datasets, enabling more accurate predictions for subsurface formation analysis.

-

Indiana University Bloomington

Bloomington, IN, United States

Working in the Cognitive AI lab under Dr. Zoran Tiganj, focusing on in-context learning mechanisms in Transformers. Investigating temporal dynamics of attention heads in Large Language Models, drawing parallels to human memory.

Sep 2024 - present

Research Assistant

-

July 2023 - June 2024

Software Engineer

Radisys India Ltd.

Bangalore, Karnataka, India

Worked with the OAM (Operations, Administration, and Maintenance) team to improve the 5G network infrastructure.

Handled requests across modules including:

CM (Configuration Management): Added new configurations to the YANG model according to 3GPP specifications.

PM (Performance Management): Handled performance counters by modifying the C++ and Python codebase.

-

Technocolabs Softwares Inc.

Indore, Madhya Pradesh, India (remote)

Developed machine learning models on existing data to predict used car prices and determine whether a used car is worth its posted price.

Preprocessed the large dataset in Python and performed EDA. Used BigQuery to build the data warehouse and Google Cloud Composer to manage the ETL workflow.

July 2022 - Sep 2022

Data Engineer Intern

-

June 2022 - July 2022

Research Intern

IISER Bhopal

Bhopal, Madhya Pradesh, India

Worked under the mentorship of Dr. Sunando Datta.

Studied target genes of the YAP transcription factor in the Hippo signaling pathway in order to inhibit tumor growth.

Implemented web scraping in Python and visualized the target gene data.

Also wrote a Python script for cell migration analysis and deployed the web application using Flask.

-

IIT Mandi

Mandi, Himachal Pradesh, India

Worked under the mentorship of Dr. Tulika P Srivastava on this Computational Biology Project.

Designed indices to increase throughput of Illumina sequencing.

Wrote a Perl script for the barcode sequences and performed quality analysis for error reduction.

July 2021 - Nov 2021

Research Project

about me

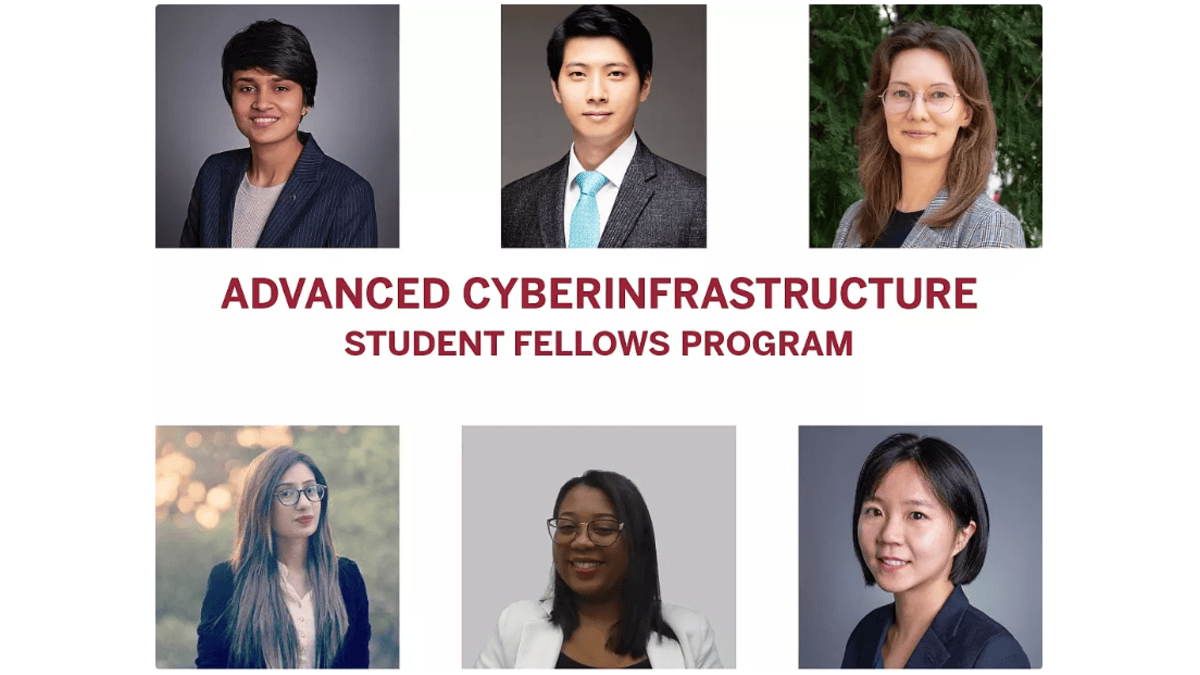

I am a graduate student at Indiana University Bloomington pursuing an M.S. in Data Science. I completed my B.Tech. in Bio-Engineering with a minor in Computer Science Engineering at IIT Mandi in 2023.

I currently work in the Cognitive AI Lab at Indiana University Bloomington, where my research focuses on mechanistic interpretability, in-context learning, and AI safety and alignment in large language models. My work aims to better understand model behavior and improve the reliability of modern AI systems.

Previously, I worked as a Data Science Intern at TGS, where I developed and fine-tuned foundation models for large-scale geological datasets. Prior to this, I worked as a full-time Software Engineer at Radisys.

Talk to me about AI, Rationality and EA, and I'd be happy!

Name

Anooshka Bajaj

Address

Bloomington, IN, United States

anooshkabajaj@gmail.com